I'll start with a confession.

There are days I use AI to think for me. Not to assist my thinking - to replace it. I get a clean, confident answer and I move on, feeling vaguely productive. It took me a while to notice that I was finishing those days knowing less than I started them. Not because the answers were wrong. But because I'd skipped the part where I wrestle with something. And that wrestling, it turns out, is the whole point.

That discomfort is what this article is about.

The Gap Was Always There

Let's be honest about something we don't often say out loud: cognitive ability, curiosity, and critical thinking have never been evenly distributed. Some people love sitting with hard questions. Others prefer quick answers. Some instinctively interrogate what they read. Others accept it. This isn't a moral distinction - it's just human variation, shaped by education, environment, habit, and a hundred other factors.

For all of history, this gap existed. But it had a kind of natural friction. Shallow thinking got exposed. You'd run out of depth in a conversation. You'd be wrong in a way you couldn't smooth over. The gap was real, but it was also somewhat permeable. Effort, curiosity, and exposure could move people across it.

That friction is disappearing.

AI as the Great Multiplier

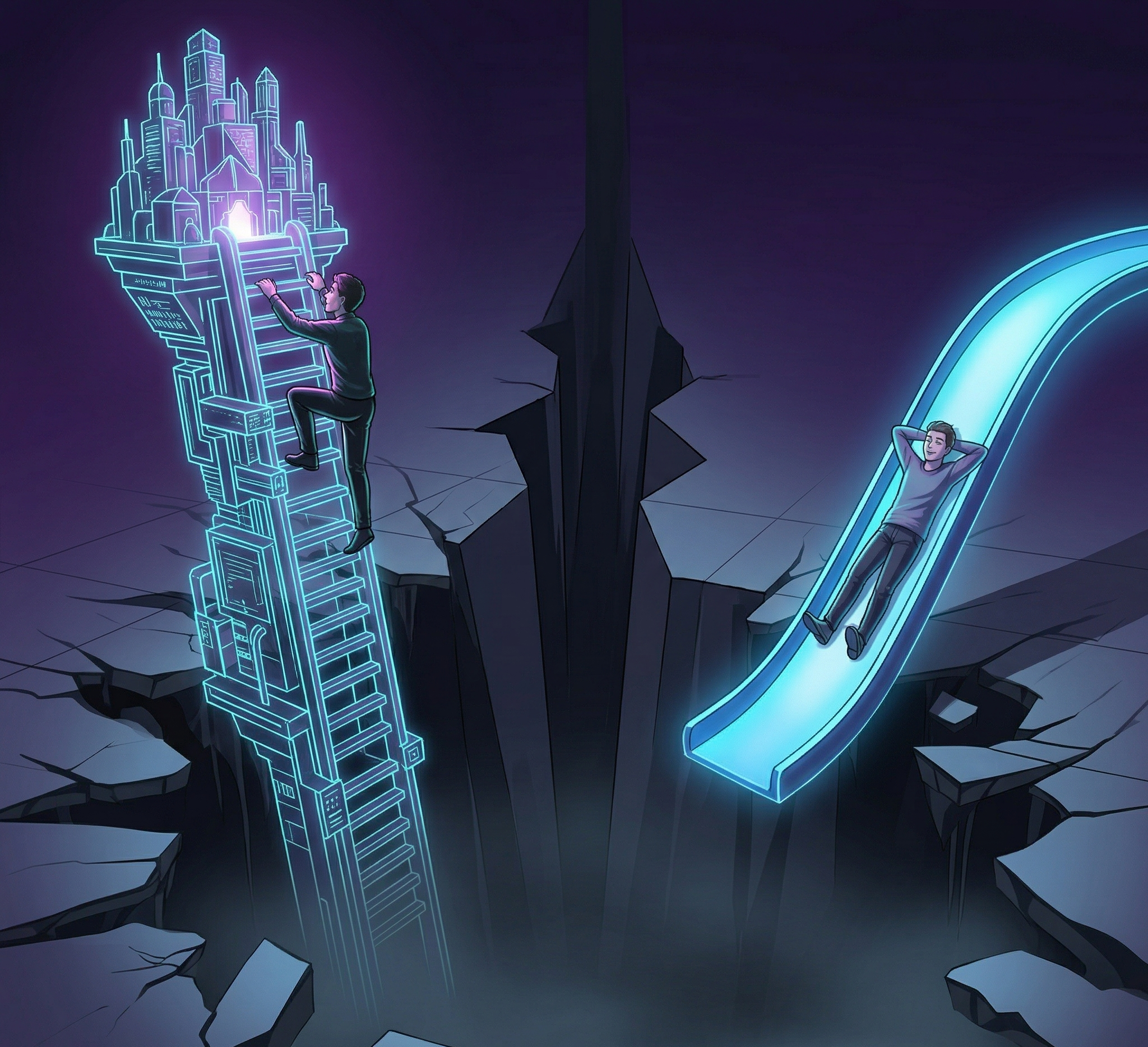

At the top end, the picture is extraordinary. People who already think rigorously are using AI to go further than they ever could alone - reading faster, synthesising across disciplines, pressure-testing their own arguments, exploring ideas at a depth that would have taken years before. The curious are compounding their curiosity. The sharp are getting sharper.

This is genuinely exciting. But it's only half the picture.

Because AI doesn't just amplify good thinking. It also makes it entirely optional.

For someone already inclined to skip the hard work of reasoning - to reach for the nearest available answer rather than sit with uncertainty - AI is the most elegant shortcut ever built. Fluent, confident, immediate. It doesn't just answer your question. It sounds like it really knows.

Researchers have started to document what happens next. A study of 666 participants across age groups found a significant negative correlation between frequent AI tool usage and critical thinking scores - with younger participants showing the highest dependency and the lowest scores. A separate Microsoft study of knowledge workers found a similar pattern: the more confidence people placed in AI, the less critical thinking they reported doing. The more they delegated, the less they engaged.

And here is where something quietly troubling begins.

The Floor Is Dropping

There's a concept in medicine called disuse atrophy. When you stop using a muscle - even a healthy one - it weakens. Not dramatically, not overnight. Just a slow, imperceptible decline that you don't notice until you need that muscle and it isn't there.

Researchers at MIT have begun applying this exact framing to cognition - what they call cognitive atrophy from AI dependency. But I think the academic literature undersells the social dimension of what's actually happening.

Because this isn't just about individuals making bad choices with their tools. It's about a structural pull - built into the design of the systems themselves - toward passivity. AI is optimised to satisfy. To remove friction. And friction - real, frustrating, uncomfortable cognitive friction - is where actual understanding lives.

When AI handles the reasoning consistently and frictionlessly, year after year, the mental habits that make someone a good thinker don't stay static. They quietly erode. The capacity to tolerate ambiguity. The ability to hold two competing ideas and work through the tension. The instinct to ask why, not just what. These aren't fixed traits. They're practiced skills. And like all skills, they decay when left unused.

The floor, in other words, isn't holding steady. It's dropping.

The Cruelest Part: You Can't Feel It Happening

Here is what I find most worth sitting with.

Cognitive atrophy - unlike physical atrophy - comes with no pain signal. No limp. No visible symptom. In fact, it comes dressed as its opposite: you feel more capable, because the output is better. Your emails sound sharper. Your arguments sound more considered. Your understanding feels broader.

One study captured this precisely: 83% of participants who used AI to write an essay could not accurately quote from the essay they had just produced. The output existed. The understanding did not.

The experience of thinking well and the experience of having AI think for you are becoming indistinguishable from the inside. This is unprecedented. Every previous shortcut had some friction built in. Calculators didn't make you feel like a better mathematician. Google didn't make you feel like you deeply understood what you'd just searched. But AI, precisely because it mimics the texture of real thought so convincingly, creates something new: the feeling of intellectual growth while the underlying capacity quietly contracts.

We've built something that makes it effortless to stop thinking - and effortless is seductive for everyone.

So What Are We Actually Talking About?

Not a story about smart people and dumb people. That framing is too easy, too uncharitable, and frankly wrong.

This is a story about incentives and architecture. And about a gap that compounds silently. Researchers put it plainly: AI widens cognitive inequalities even as it democratises access to information. The tool is available to everyone. The benefit accrues to those who were already disposed to think carefully - because they're the ones using it to go deeper rather than to opt out.

The question worth asking isn't "is AI making some people dumber?" That's inflammatory and misses the point. The better question is: are we building a relationship with AI that exercises our thinking - or one that quietly replaces it?

Those are two very different trajectories. And right now, for many people, the path of least resistance leads clearly toward one of them.

I Don't Have a Clean Answer

I want to be careful here. I'm not arguing we should resist AI, or that there was some golden age of deep thinking we've lost. I'm not certain about most of what I've written here. These are observations, not conclusions.

But I do think this: the people who will thrive in an AI-saturated world are not necessarily those with the most access to the tools. They're the ones who remain stubbornly, almost irrationally committed to the process of thinking - who use AI to go deeper, not as a substitute for thinking at all.

And the gap between those people and everyone else is widening faster than almost anyone is talking about.

That's the thing I can't stop thinking about.

What do you think?

A note on how this was written: this piece was developed in conversation with AI - as a thinking partner and sounding board, not a ghostwriter. The ideas, the arc, and the argument are mine. The irony is intentional.